Wednesday, December 8, 2010

Quick flash back on MS SQL Server Code Names

SQL95 -----------------SQL Server 6.0

Hydra -----------------SQL Server 6.5

Sphinx ----------------SQL Server 7.0

Shiloh ----------------SQL Server 2000 (32-bit)

Liberty ---------------SQL Server 2000 (64-bit)

Yukon -----------------SQL Server 2005

Katmai/Akadia ---------SQL Server 2008

Kilimanjaro -----------SQL Server 2008R2

Denali ----------------SQL Server 2011

Monday, December 6, 2010

What's New in Report Builder 3.0

Collaboration and Reuse

Report Parts

Report parts are report items that you store on a report server, or on a SharePoint site that is integrated with a report server. You can reuse report parts in multiple reports, and you can update them on the server.

The report part that you add to your report maintains a relationship to the instance of the report part on the site or server by means of a unique ID. After you add report parts from a site or server to a report, you can modify them, independent of the original report part on the site or server. You can accept updates that others have made to the report part on the site or server, and you can save the modified report part back to the site or server, either adding a new report part or writing over the original.

Report parts let work groups utilize the different strengths and roles of their team members. For example, one person can create charts and save them as report parts that others can reuse in their reports.

Shared Datasets

Shared datasets are a new type of report server item that can retrieve data from shared data sources that connect to external data sources. A shared dataset provides a way to share a query to provide a consistent set of data for multiple reports. The dataset query can include dataset parameters. You can configure a shared dataset to cache query results for specific parameter combinations on first use or by specifying a schedule. You can use shared dataset caching in combination with report caching and report data feeds to help manage access to a data source.

Like report parts, a shared dataset that is added to a report maintains a relationship to the definition of the dataset on the report server. Unlike report parts, when the definition is changed, you do not have to accept updates. All reports that have the relationship always use the shared dataset definition on the report server.

Data Sources

Reporting Services supports three new data source types: Microsoft SQL Azure, Microsoft SQL Server Parallel Data Warehouse, and Microsoft SharePoint List.

SharePoint List Data Extension

The SharePoint list data extension lets you specify a SharePoint list as a data source. In your report, you can include data from a SharePoint list for the following versions of SharePoint sites: SharePoint Foundation 2010, SharePoint Server 2010, Windows SharePoint Services 3.0, and Office SharePoint Server 2007. The associated SharePoint List query designer provides a graphical interface to explore the metadata for all lists that you have sufficient permission to view. In each dataset query, you can select multiple fields from one SharePoint list.

Microsoft SQL Azure

The Microsoft SQL Azure data source type connects to SQL Server databases in the cloud and enable reports to retrieve and render report data from SQL Azure databases.

Microsoft SQL Server Parallel Data Warehouse

The Microsoft SQL Server Parallel Data Warehouse data source type connects to a Microsoft SQL Server Parallel Data Warehouse and enable reports to retrieve and render report data from SQL Server databases.

Data Visualization

Maps

Report Builder 3.0 provides a Map Wizard and Map Layer Wizard to add maps and map layers to your report to help visualize data against a geographic background. A map layer displays map elements based on spatial data from a map in the Map Gallery, from a SQL Server query that returns SQL Server spatial data, or from an Environmental Systems Research Institute, Inc. (ESRI) shape file. Map elements can be polygons that represent shapes or areas, lines that represent paths or routes, or points that represent locations such as stores or cities. You can also add a background that displays Bing map tiles.

After you relate map elements with report data, you can specify rules for the map elements on each layer that control color, size, width, or marker type. For example, you can add a bubble map that varies bubble size for store locations based on sales or a color analytical map that displays customer demographics for geographic regions.

You can add labels, legends, titles, a color scale, and a distance scale to help your users interpret the map display. You can add interactive features such as tooltips and drill-through links, or provide parameters that enable a user to interactively control the visibility of each layer.

Configuring a Map Layer

In a map report, you can use the map wizard to configure an existing map layer. You can change the type of map, the data visualization rules, and change match field that specifies the relationship between spatial data and analytical data. The Specify the match fields for spatial and analytical data wizard page displays data from both the spatial data source and the analytical data source to help you choose the field on which to match.

By default, reports that display a Bing map as a background use an HTTP connection. You can also specify whether to use a Secure Sockets Layer (SSL) connection to the Bing Maps Web server.

Sparklines and Data Bars

Sparklines and data bars are simple charts that convey a lot of information in a little space, often in-line with text. Sparklines and data bars are often used in tables and matrices. Their impact comes from viewing many of them together and being able to quickly compare them, instead of viewing them singly. This makes it easy to see the outliers. Each sparkline often represents multiple data points over time. Data bars can represent multiple data points, but typically illustrate only one. Each sparkline typically presents a single series. You cannot add a sparkline to a detail group in a table. Because sparklines display aggregated data, they must go in a cell that is associated with a group.

Sparklines and data bars have the same basic chart elements of categories, series, and values, but they have no legend, axis lines, labels, or tick marks.

Indicators

Indicators are minimal gauges that convey the state of a single data value at a glance. The icons that represent indicators and their states are visually effective, even when they are used in small sizes. Indicators can be used by themselves in dashboards or free-form reports, but they are most commonly used in tables or matrices to visualize data in rows or columns.

Indicators can show trends by using directional images such as arrows; ratings by using incremental icons such as stars; and states by using images such as traffic lights or check marks. Indicators are available in Report Builder 3.0 and Report Designer.

Aggregates and Expressions

Calculating Aggregates of Aggregates

You can create expressions that calculate an aggregate of an aggregate. For example, in a cell in the row group header that is associated with a group based on year, you can calculate the average monthly sales for year by using the expression =Avg(Sum(Fields!Sales.Value,"Month"),"Year").

By using this feature for charts and gauges that are nested in a table, you can align horizontal and vertical axes for charts and scales for gauges. You do this by calculating the maximum and minimum of aggregated values so the nested items use the same ranges.

Enhanced Support for Expressions

New global and a new property for report variables are introduced in this release.

The following additional items have been added to the built-in globals collection.

• OverallPageNumber and OverallTotalPages Support for page numbering for the entire rendered report. New properties that are related to page breaks control how the page numbering occurs over page breaks that are set on report items.

• PageName Support for naming pages.

• RenderFormat Support for information that is specified for the renderer.

Report variables now have a read-only property that is set by default. Advanced expression writers can clear this option to preserve the value of a report variable throughout a report session. This is the equivalent of setting the Writable property for a report variable in RDL. Report variables are still calculated once, and then recalculated every time the report is reprocessed. This property provides a way to persist data across report rendering, page changes, and some types of user interaction. The value of the variable is set when the report is reprocessed, but is maintained in the current session. In custom code, you can specify the variable value by using the SetValue function.

Report Layout and Rendering

Naming Excel Worksheet Tabs

Properties of reports and page breaks let you generate the names of worksheet tabs when you export reports to Excel. You can provide an initial page name of a report that can be exported as the default name of worksheet tabs, or use page breaks and page names to provide different names for each worksheet tab.

Rendering Reports to Data Feeds

The Atom rendering extension renders reports data as Atom-compliant data feeds. Depending on the layout of your report, the Atom rendering extension creates one or more data feeds. The data feeds can be exchanged and read by applications that can consume Atom-compliant data feeds. For example the PowerPivot client can use Atom-compliant data feeds that are generated from reports.

Using report data as data feeds gives you an additional way to provide data to applications when the data is not easily accessible through client data providers, or you prefer to hide the complexity of the data source and make it simpler to use the data. You can generate as Atom-compliant data feeds from Report Manager or a SharePoint site that is integrated with Reporting Services.

Report Pagination

Page breaks on tablix data regions (table, matrix, and list), groups, and rectangles give you better control of report pagination. Report pages, delimited by page breaks, can have different page names and reset page numbering. By using expressions, the page names and page numbers can be dynamically updated when the report is run. You can also disable page breaks entirely, or based on expression values.

Report Authoring Tools

Making it Easier to Design and Edit Reports

The run-time credentials, specified in the data source properties of a report, might not work for design time tasks such as creating queries and previewing reports. Report Builder 3.0 provides a user interface for changing credentials when it is unable to connect to the data source.

Rotating Text 270 Degrees

Text boxes can now be rotated 270 degrees. Standalone text boxes in a report header or footer, the report body, or text boxes in the cells of tables and matrices can display text written vertically and bottom to top. This rotation helps you create more readable reports, fit more columns on a printed report with fixed page size, and create reports with more graphical appeal.

Add image in the SQL Table

insert into ImageDB (description, img)

(

Select 'description1234', *

from OPENROWSET(BULK N'C:\Users\Public\Pictures\Sample Pictures\Desert.jpg', SINGLE_BLOB) as tempImg

)

Select * from ImageDB

Thursday, November 18, 2010

What’s New in R2 Report Manager

Report Builder is a ClickOnce application deployed via the browser. Users start by selecting report layout templates containing pre-defined data sections such as tables, matrices and charts. They drag and drop report items from the model to the design surface and set constraints to filter the report data. The model contains all of the necessary information for the Report Builder to automatically generate the source query and retrieve the requested data.

The Report Builder also allows users to:

• Add text and formatting to reports

• Create new fields and calculations defined using the model

• Preview, print and publish reports

• Export report data to formats such as Microsoft Excel

The latest edition of Microsoft's Report Builder tool provides plenty of data visualization capabilities not available with SQL Server 2008, with perhaps the coolest improvement being the ability to add maps displaying spatial and analytical data.

Version 3.0 also brings with it new capabilities for working with gauges and charts in SQL Server Reporting Services (SSRS), and while it is part of the R2 suite of tools, the tool can be downloaded separately to run with previous versions of SQL Server as well.

2008 R2:

Displays current location in the Browser’s title, removed the tabs, new look-and-feel, drop down list on items to get at additional options (without switching to details view), new icons.

New look-and-feel, last run instead of when run

New loading message and cancel option

Removed tabs, condensed the view to allow more space for viewing report, new icons,

New Export to Data Feed option (.atomsvc output)

New context menu on items to get at additional tasks such as Edit in Report Builder and Manage along with new Folder Settings option in the toolbar (no more properties tab)

New Manage option in the context menu to get at the properties and also includes the History and Subscriptions information now

Report Manager – Home Page

The new theme is easy on the eyes. When you hover over the items, whether it’s a folder or a file, there will be a contextual dropdown, Feels very much like SharePoint (2010).

Report Manager – Site Settings

On the Site Settings page, you have General Properties, Security and Schedule.

Report Manager – Data Source Properties

Data Source Properties page looks familiar, with the exception of Dependent Items. Dependent Items page will list all items (reports, data sets) that use this particular Data Source.

Report Manager – Search

Yes, you can now search in SSRS Report Manager! Type in your keyword, and away you go! It will find your report, your data set, your folder, or even your report part.

Report Manager – Report Contextual Dropdown

If you have worked with SSRS in Integrated Mode in SharePoint, the contextual dropdown in the revamped Report Manager will look familiar. When you hover over a report item and click on the down arrow, you will be able to move, delete, edit in Report Builder 3.0, and explore other properties, to name a few.

Report Manager – Report Properties

If you clicked on “Manage” in the contextual dropdown of a report item, you will be taken to this page. Note that on the left column, you have links for: properties, data sources, shared data sets, subscriptions, processing options, cache refresh options, report history, snapshot, and security.

Report Manager – Shared Data Set Properties

Finally there is a way to share data sets among reports!

Report Manager – Shared Data Set Caching Properties

And yes, you can also cache these shared data sets.

What’s New in R2 Report Server

New Feature List

New Report Server Architecture

This release features a redesigned server architecture that removes the dependency on Internet Information Services (IIS) while retaining all the server functionality of earlier versions. To replace the functionality previously provided by IIS, Reporting Services now includes native support for HTTP.SYS and ASP.NET, URL management for site and virtual directory names, a new authentication layer, and health monitoring through new memory management features. In addition, the new architecture consolidates the Report Server Web service, Report Server Windows service, and Report Manager into a single service. In SQL Server 2008, you only have to define and manage one service account for all report server applications.

Enhanced Toolset for Report Server Configuration and Management

This release includes changes to the server tools. A report server is configured and managed through a combination of tools that include the Reporting Services Configuration tool, SQL Server Management Studio, Report Manager for a native mode report server, and SharePoint application pages for SharePoint integrated mode. In this release, each tool supports a specific purpose and focus. Support for specific tasks have been added to some tools and removed from others.

New Support for Data-driven Subscriptions and Job Management in SharePoint Integrated Mode

This release adds support for data-driven subscriptions and job management on report servers configured for SharePoint integrated mode.

New Report Server Architecture

Report server architecture is fundamentally changed in SQL Server 2008 by the removal of Internet Information Services (IIS) dependencies and the consolidation of applications into a single service. The new architecture embeds HTTP server capabilities into the report server itself, allowing you to run a report server as a true a middle-tier application, separate from Web front-end applications used to access it. The report server also includes a new hosting layer to authenticate users, manage memory and internal processes, and support end-to-end tracing and logging.

Native support for HTTP.SYS and ASP.NET

Reporting Services no longer uses Internet Information Services (IIS) to gain access to ASP.NET functionality, the Report Manager application, or the Report Server Web service endpoint. In SQL Server 2008, Reporting Services does the following:

• Hosts the ASP.NET and Microsoft .NET Framework technologies that are built into SQL Server CLR.

• Leverages the HTTP.SYS capabilities of the operating system.

Native support for URL reservations and registration of the report server endpoints

The report server includes an HTTP listener that accepts requests that are directed to a URL and port that you define during server configuration. URL reservations and registration is now managed directly by the report server through HTTP.SYS.

Consolidated services and applications

This release combines the Reporting Services server applications into a single service. The following server applications run within a single service: the Report Server Web service for interactive report processing, the Report Manager front-end component, and the Scheduling and Delivery processor (a background processing application for scheduled operations).

Consolidating the server applications into a single service reduces configuration and maintenance tasks, and provides a better platform for integrating custom functionality. Although consolidation simplifies deployment, it does not reduce the Reporting Services features you are accustomed to using or affect how you access the applications. All functionality continues to be available. Both Report Manager and the Report Server Web service run interact within the single service. Both applications continue to be available through URLs that provide HTTP access to each one.

Authentication layer

In the previous release, authentication was handled through IIS. In this release, Reporting Services handles all authentication requests through a new authentication subsystem that supports Windows-based and custom authentication.

Memory configuration

New memory management features enable you to set a memory threshold for report processing. In previous releases, the report server used all available memory. In this release, you can configure a maximum limit on memory as well as interim thresholds that determine how the report server responds to changes in memory pressure.

End-to-end logging

New HTTP logging keeps a record of all HTTP requests handled by the report server. It is equivalent to the log file that is generated by IIS.

Trace log files are consolidated into a single ReportServerService_

Enhanced Toolset for Report Server Configuration and Management

Reporting Services includes a complete set of tools for configuring and managing a report server installation. The tools have been redesigned to support a distinct set of tasks that no longer overlap in functionality. New capabilities are added to some tools, whereas other capabilities have been removed to eliminate redundant tasks.

SQL Server Management Studio

For report servers that run in native mode or in SharePoint integrated mode, this tool is used to enable features, set server properties and defaults, create shared schedules, configure role definitions (or view permission levels on a SharePoint site), and manage scheduled jobs that are currently in progress on the report server.

This tool is no longer used to manage the folder hierarchy or report server content. You cannot use this tool to assign permissions, nor can you manage reports, models, resources, shared data sources, or data-driven subscriptions. All content management is through Report Manager or a SharePoint site.

Report Manager

For a native mode report server, Report Manager is now the only tool that you use to view and manage report server content.

Assigning permissions, creating and managing report server items, scheduling report and subscription processing, managing report delivery, and generating and managing models are handled exclusively through this tool. New pages are provided for generating models, setting model item security, and associating clickthrough reports to entities in a model.

To use Report Manager, you must define a URL to the application. You can effectively disable Report Manager by not creating the URL in the first place. If you installed Reporting Services in the default configuration, the URL is already created and you must delete it if you decide to turn the application off.

If you configure the report server to run in SharePoint integrated mode, Report Manager is turned off. You cannot use Report Manager on a report server that runs in SharePoint integrated mode, even if you previously configured the URL.

Reporting Services Configuration tool

The Reporting Services Configuration tool is used to set the service account, create or manage the report server database, configure URLs, set the unattended execution account, configure report server e-mail, and manages encryption keys. The tool includes new pages for defining URLs and a revised workflow for creating and configuring a report server database or updating a service account.

New Support for Data-driven Subscriptions and Job Management in SharePoint Integrated Mode

This release of Reporting Services introduces data-driven subscriptions and job management for report servers running in SharePoint integrated mode. You can define data-driven subscriptions for reports that you have deployed to a SharePoint library by using the new subscription definition pages that are provided by the Reporting Services Add-in for SharePoint Technologies. The add-in also provides new job management pages at the site level to view and cancel reports that are in progress. Note that you must install or upgrade to the Reporting Services Add-in to access the new report server functionality from your SharePoint site.

Wednesday, November 17, 2010

SSIS Logging

E.g.:- Consider the following scenario: a package has been developed in the development environment, tested it in test environment and finally deployed it onto the production server. In the beginning everything was fine, with the package running as expected. Sometime later the package execution failed each time upon being run. In this situation there is commonly uncertainty regarding what is causing the package to fail. Hence, logging is very useful. So what is event logging is and how does it help understand problems encountered during runtime.

Integration Services includes logging features that write log entries when run-time events occur and can also write custom messages. We use the logging to log the information about the event happening at the run-time for auditing and troubleshooting purpose.

SSIS package logging can log the information on one or more different selected log providers as mentioned below:

• SSIS log provider for Text files – logs the information into a text file, during configuration the location and file name need to be specified.

• SSIS log provider for Windows Event Log – logs the information into the Windows Log Event under Application node and SQLISPackage90/ SQLISPackage100 as source. Writes each event to the Windows Application log - What makes this choice a nice one is it creates hooks for monitoring products like Microsoft Operations Manager (MOM) to use for alerts. i.e. In windows 7 StartMenu --> Run --> Event Viewer --> Windows Logs --> Application

• SSIS log provider for XML files – logs the information into the XML file, of which the location and file name are specified during configuration.

• SSIS log provider for SQL Profiler – creates a *.trc file which can be opened in SQL Profiler to analyses further. Create a profiler trace file - This option gives you some interesting ties in with Profiler if you're examining performance issues like long-running packages. With this seamless integration, you can quickly tie the log to a System Monitor trace to see performance counters

• SSIS log provider for SQL Server – logs the information into sysdtslog90/ sysssislog table of msdb database that requires a connection manager to be provided during configuration. Creates a table Points to the location where the connection manager points to the database in SysTables-->Sysssislog table.

Example: To enable Logging, right click on the package and select logging or or Go to the menu SSIS and select logging: A logging configuration window will pop-up.

Containers Tree view on Left-side – displays the hierarchical view of a package and all its executable (containers and tasks). Select the executable to enable for logging. Providers and Log Tab - Select one or more different log providers from the combo box and click the Add button on the right as shown below, two log providers have been selected; one to write to text file and the other to write to Windows Event Log. You can configure the file location.

Details Tab – This tab displays all the events of the selected executable, select which events to log. For example: below OnError and OnTaskFailed event have been selected at the package level. After the package has run the result of logging can be seen.

Sunday, November 14, 2010

Report Model Overview

Ad hoc report authoring is a complex task that does not fit a traditional business profile. Information workers know what information they need and how their report should look, but they usually don’t have the technical skills necessary to create a rich report design, to understand real-world database schemas, or to use formal query languages such as SQL and MDX. In contrast, IT staffers understand report design, the technical aspects of the data source schema, and formal query languages, but they are not available on demand to analyze, design, and deploy urgent or one-time reports. Therefore, businesses need a reporting solution that enables information workers to create reports on demand and that speeds up simple report generation by IT staff. The appropriate solution should cover two objectives:

• Make physical data source schemas easier to understand and utilize.

• Simplify the overall report-authoring experience for non-technical employees.

You can fulfill the first requirement by creating a metadata layer on top of the database schema and preparing it to present the most-frequently requested data in the most intuitive and accessible manner by using business taxonomy. The metadata layer will then translate report design, filtering, and parameters into the correct and optimized native queries against the underlying physical data source.

The second requirement is met by combining query design and layout design into simplified, high-level user actions. Report authoring using Report Builder starts with a predefined report template, which modifies the query and report layout together as the user adds or removes fields, groups, and subtotals. Templates drive the task of setting report layout, adding some uniformity to reports produced by different users.

SQL Server 2008 Reporting Services addresses these two requirements with two new technologies known as report models and Report Builder. The metadata layer is implemented in Semantic Model Definition Language (SMDL) models and the Semantic Query Engine. The technology to simplify report authoring is provided by the Report Builder application. In the following section you will learn more about these tools and technologies.

Ad Hoc Reporting Components

The following components comprise the ad hoc reporting functionality in Reporting Services.

• Report Builder client (end-user report creation tool).

• Report model (abstraction layer that provides business context to the end user).

• Model Designer (design tool used to create the report model).

• Semantic Query Engine (processor that generates data-source specific queries and accesses relational and multidimensional data sources when necessary).

• The report models and the user-created reports that are deployed on a report server and managed as standard report server objects.

Report Model

The report model is the metadata layer component that serves as the basis for building reports. Report designers, both end users and IT staff, will use the report model as the data source for the report instead of directly accessing a relational database or OLAP cube data source when designing reports. The report model contains three key elements necessary for the ad hoc reporting process to function smoothly: bindings to the underlying data-source schema, a logical representation of the schema in the form of business entities that are familiar to end users, and the default information to be used according to the role that the entity plays in the report (primary entity, lookup entity, summary, and so on). A report model definition is an XML file that follows the Semantic Model Definition Language (SMDL) specification. Therefore, report models are also referred to as semantic models, SMDL, or SMDL models.

Report Model Components

Report models primarily consist of the following three item types:

• Entities are the equivalent of objects or tables in a database. Entities are composed of attributes and roles.

• Attributes are the equivalent of object properties or columns in a database table.

• Roles are the equivalent of object references or relational foreign keys and join operations.

Additionally, report models incorporate some organization functionality, allowing administrators to create folders (containers) and perspectives (sub-models). Consequently, report models for larger data sources can be more focused for their intended audience.

Semantic Model Definition Language

SMDL is an XML specification that defines the items allowed in a report model file. The report model file extension is ".smdl". SMDL defines items similar to objects, attributes, and references that are stored in collections whose root ancestor is the Semantic Model object itself.

Model Designer

You can create report models manually or generate them automatically. SQL Server 2008 provides three tools for generating report models: Model Designer, Report Manager, and SQL Server Management Studio. Model Designer is an editing environment, embedded in SQL Server Business Intelligence Development Studio, that lets IT staff (development and/or operations) generate a draft report model from a relational data source and then refine it to match actual user reporting needs. Report Manager and SQL Server Management Studio can automatically generate a report model from a SQL Server or Oracle relational data source or an Analysis Services multidimensional data source. However, you cannot use these tools to customize a report model after it has been generated. You can use Model Designer to customize models generated from relational data sources, but not from Analysis Services data sources.

Semantic Query Engine

The report model maps the data used in report definition to the schema in the data source. This mapping serves two purposes: first, it gathers the data to display in the report, and second, it filters the data request according to default filters, security filters, and report parameters.

The Semantic Query Engine’s job is to build and execute the appropriate queries against the SQL Server relational database or Analysis Services cube in Transact-SQL or MDX, respectively.

Report Builder

Report Builder is a client tool that lets users create, edit, view, and save report definitions from report models. Report definitions are stored as XML files that follow the Report Definition Language (RDL) specification, which is the same specification that all Reporting Services reports use. Thus, the only difference between reports designed with Report Builder and those designed with other tools is that the former ones always use a report model as the data source, while the later ones may or may not use a report model. Report Builder is a Microsoft .NET Framework application deployed dynamically via Click Once technology to the client. It requires the .NET Framework version 2.0 to be deployed on the client workstation. When a user starts Report Builder, it is installed automatically from the report server to a client workstation. The application is cached locally on the client workstation, updating automatically when a new version is deployed to the report server.

Report Models from SQL Server Relational Databases

You can create and edit an SMDL model from scratch by creating entities and adding attributes and roles to them. Both entities and attributes bind to specific fields in the underlying schema. However, you can accelerate this task by using the model-generation options provided by Reporting Services. You can use either the Model Designer to manually create a report model, or you can use Reporting Services management tools to automatically generate and publish a model. The Report Model Wizard, invoked from the Model Designer, creates a draft model and provides a user interface for specifying rules for model generation, whereas the automated process uses predefined rules.

Create a New Report Model Project

Report Model Projects contain a Data Source definition file, a Data Source View definition file, and a Model definition file. We will gain exposure to each of these components in the respective section of the procedure that follows.

1. Click the Start button.

2. Select Microsoft SQL Server 2008 within the Program group of the menu.

3. Click SQL Server Business Intelligence Development Studio Close the Start Page tab.

5. Select File --> New on the Visual Studio main menu.

6. Select Project from the cascading menu

7. Select Business Intelligence Projects in the Project types pane of the dialog.

8. Select Report Model Project in the Templates pane

NOTE: The templates that appear in your template pane may differ, depending upon which SQL Server 2008 components are installed in your environment, as well as whether additional templates (for Business Intelligence Projects or other types of projects that can be created) have been defined in Visual Studio.

9. Change the project Name (currently displaying a default) to the following:

The Solution Name changes to match the project Name by default.

10. Navigate to a convenient location to store the Project and Solution files, modifying the Location box accordingly (Visual Studio will create a directory based upon our input here).

11. Click OK to accept our input and to create the new Report Model Project.

The New Project dialog closes, as Visual Studio creates the project. Both solution and project RS028 Intro Report Model appears in the Solution Explorer

Solution Explorer presents a tree view of the objects contained in the solution, the primary management unit within the Business Intelligence Development Studio, which can contain multiple projects. Individual projects, such as the one we have created, themselves contain folders for the objects that can be defined for projects of a similar type.

As we can see in the present instance, the Report Model Project template, upon which our RS028 Intro Report Model Project was based, contains the following folders:

• Data Sources

• Data Source Views

• Report Models

Define a Data Source

Defining a Data Source, as we have discovered in past articles, is typically the first step we take with most BI Projects – be they Analysis Services, Report Model, or other varieties. A project can have multiple Data Sources, within which we are essentially defining the connection string used to connect to the actual source of our data. While we can define connections to source databases on remote computers in the business environment, we will, in this article, be working with one of the sample databases that are available to us when we install MSSQL Server 2008, the AdventureWorks database.

Let's create a Data Source within our new RS028 Intro Report Model Project by taking the following steps:

1. Right-click Data Sources folder within the Solution Explorer.

2. Select Add New Data Source from the context menu that appears

The Data Source Wizard appears, opening with the Welcome to the Data Source Wizard page

3. Click Next.

The Select how to define the connection page appears. This page allows us to define a Data Source based upon a new or existing connection. (It also allows us to base a Data Source upon a connection that has been previously created, as long as the existing Data Source definition exists within the same project, within another project housed in the same solution, or from within another Report Model Project.) Select how to define the connection page appears. 4. Ensuring that the radio button to the left of Create a data source based on an existing or new connection is selected, click New. The Connection Manager dialog appears.

5. Ensure that .Net Providers\SqlClient Data Provider is selected in the Provider selection box atop the Connection Manager dialog.

6. Type \ select the appropriate server name / server name with instance into the Server name box.

We can substitute "localhost" or "localhost\

7. Select / complete the appropriate authentication choice for your environment in the section labeled Log on to the server (I selected the radio button to the left of Use Windows Authentication in the lab environment in which I prepared this article).

8. Select AdventureWorks in the Select or enter a database name selector box, within the Connect to a database section of the dialog.

The Connection Manager dialog appears

9. Click the Test Connection button (in the bottom left corner of the Connection Manager dialog) to ascertain that we have a valid connection.

We receive a positive confirmation message, Test connection succeeded, assuming settings in the dialog are in accordance with the above steps, as appropriate for our own environments. The confirmation message box appears

10. Click OK to dismiss the message box and return to the Connection Manager dialog.

11. Click OK on the Connection Manager dialog to accept our settings and create the data connection.

We return to the Select how to define the connection page of the Data Source Wizard

12. Click Next.

The Completing the Wizard page appears.

13. Replace the name that appears in the Data source name box with the following:

RS028_Adventure Works

The Completing the Wizard page appears

14. Click Finish to create the new Data Source, and to dismiss the Data Source Wizard.

The Wizard closes, and the new Data Source appears in the Data Sources folder within the Solution Explorer

Having created a Report Model Project, and defined a Data Source, we are ready, to define a Data Source View.

Define a Data Source View

Our next steps surround the creation of the Data Source View, a procedure that is customary at this stage in both Analysis Services and Report Model Projects. It is important to realize, as we work with a "live" data connection that we have defined, that we could certainly continue our development efforts with the metadata without an open connection. The Data Source View provides a single, unified view of the metadata from the tables and views that concern us within our project.

To define a Data Source View, we will take the following steps:

1. Right-click Data Source Views folder within the Solution Explorer.

2. Select Add New Data Source View from the context menu that appears

The Data Source View Wizard appears, opening with the Welcome to the Data Source View Wizard

3. Click Next.

We arrive at the Select a Data Source page, where we see the Data Source we created in the previous section, RS028_Adventure Works, in the Relational data sources list box on the left side of the page. Our newly created Data Source is positioned as the default, and will serve us in meeting the objectives of our practice exercise. A Data Source View for a Report Model Project, unlike a Data Source View for an Analysis Services Project, can only reference a single Data Source.

4. Click Next.

We arrive at the Select Tables and Views page, where we see the various tables of the Adventure Works data source appear in the Available objects list box on the left of the page.

5. Select all tables in the Available objects list, by clicking the button marked ">>"

The tables in the Available objects list box move to the included objects list on the right half of the page

6. Click Next.

The Completing the Wizard page appears.

7. Replace the name that appears in the Data source name box with the following:

RS028_Adventure Works

The Completing the Wizard page appears

8. Click Finish to create the new Data Source View, and to dismiss the Data Source Wizard.

The Wizard closes, and the new Data Source View appears in the Data Source Views folder within the Solution Explorer. We have defined a Data Source View that contains the metadata for the entire Adventure Works sample database. Naturally, we might have only entrained select tables, based upon the business requirements of the intended audience of the model, but for purposes of our practice session, this will serve to illustrate the concepts. We will next define our Report Model based upon some of this metadata, which designated information consumers can then use within the Report Builder.

Define the Report Model

Report Models themselves represent an abstract layer, as we noted earlier, within which we can create business-based entities from which consumers can select report items. Let's create a Report Model next, and then discuss publishing it for consumer use.

1. Right-click the Report Models folder within the Solution Explorer.

2. Select Add New Report Model from the context menu that appears

The Report Model Wizard appears, opening with the Welcome to the Report Model Wizard page

3. Click Next.

We arrive at the Select Data Source View page, where we see RS028_Adventure Works, the Data Source View we created in the previous section, displayed within the Available data source views list box

Our newly created Data Source View is positioned as the default, and will serve us in the creation of our new Report Model.

4. Click Next.

We arrive at the Select report model generation rules page, where we see the rules upon which automatic metadata generation will be based. For purposes of the current session, we will allow selection of all the defaults

5. Click Next.

The metadata is combined into entities. The Collect Model Statistics page appears next.

6. Ensure that the checkbox to the immediate left of Update model statistics before generating is selected, to ensure that database statistics upon which the Report Model depends are updated from the Data Source View

7. Click Next.

We arrive at the Completing the wizard page, where we see that, by default, the Report Model has been given the same name as the underlying Data Source View, RS028_Adventure Works

8. Click Run.

The statistics update occurs, and then the rules that were selected on the Select report model generation rules page are applied within the Report Model creation process. After all passes are completed, the status messages come to an end, and the Finish button becomes enabled

9. Click Finish.

the new Report Model appears in the Report Models folder, within the Solution Explorer

Moreover, we see that the entities within the new Report Model appear on the new Report Model tab of the Designer

10. Click the Address entity on the Report Model tab, in the left Model tree.

We note that a list of fields, folders and roles (where appropriate), for an entity we select, is displayed in the middle pane of the tab. Moreover, properties for the selected entity appear in the Properties pane, as is the general case with objects throughout the Visual Studio environment.

We can define new folders, source fields, expressions, roles and filters to further add to the richness of our Report Model. We do this by simply right-clicking within the pane, selecting New, and then selecting the object type from the cascading menu

Publish the Report Model Project

We "deliver" our design efforts to the information consumers by publishing the Report Model Project to a report server. We will publish the components of our project by taking the following steps.

1. Ensure that the Report Server information is properly configured for the project, by right-clicking the new RS028 Intro Report Model Project in Solution Explorer.

2. Select Properties from the context menu that appears

The Properties page appears, with the report server URL specified within the TargetServerURL property setting

By default, the Business Intelligence Development Studio general setting is as shown above, http://localhost/reportserver. Once we have the report server aligned as we desire, deployment is a simple exercise.

3. Make changes as appropriate to the report server URL within the TargetServerURL property setting.

4. Click OK to accept changes, and to dismiss the Properties page.

5. Right-click the RS028 Intro Report Model project in Solution Explorer, once again.

6. Click Deploy from the context menu

The Model Project uploads successfully to the report server (we can view it via Report Manager to verify the upload). To make the new model available to our information consumers, we must provide access via role assignments. We will take up these steps for using Report Builder, as well as the details of various in-depth techniques and nuances in Report Model design, in separate articles within the MSSQL Server Reporting Services series.

Thursday, November 4, 2010

Import Export data between Excel and SQL Table

Tuesday, November 2, 2010

Table Type Variable and Temporary Table

DECLARE @Cars table

(

Car_id int NOT NULL,

ColorCode varchar(10),

ModelName varchar(20),

Code int,

DateEntered datetime

)

INSERT INTO @Cars (Car_id, ColorCode, ModelName, Code, DateEntered)

VALUES (1,'BlueGreen', 'Austen', 200801, GETDATE())

SELECT Car_id, ColorCode, ModelName, Code, DateEntered FROM @Cars

Temporary Table:

CREATE TABLE dbo.#Cars

(

Car_id int NOT NULL,

ColorCode varchar(10),

ModelName varchar(20),

Code int,

DateEntered datetime

)

INSERT INTO dbo.#Cars (Car_id, ColorCode, ModelName, Code, DateEntered)

VALUES (1,'BlueGreen', 'Austen', 200801, GETDATE())

SELECT Car_id, ColorCode, ModelName, Code, DateEntered FROM dbo.#Cars

DROP TABLE dbo.[#Cars]

•Temporary Tables are real tables so you can do things like CREATE INDEXes, etc. If you have large amounts of data for which accessing by index will be faster then temporary tables are a good option.

•Table variables don't participate in transactions, logging or locking. This means they're faster as they don't require the overhead, but conversely you don't get those features. So for instance if you want to ROLLBACK midway through a procedure then table variables populated during that transaction will still be populated.

•Temp tables might result in stored procedures being recompiled, perhaps often. Table variables will not.

•You can create a temp table using SELECT INTO, which can be quicker to write (good for ad-hoc querying) and may allow you to deal with changing datatypes over time, since you don't need to define your temp table structure upfront.

•You can pass table variables back from functions, enabling you to encapsulate and reuse logic much easier (eg make a function to split a string into a table of values on some arbitrary delimiter).

For example:

BEGIN TRAN

declare @var table (id int, data varchar(20) )

create table #temp (id int, data varchar(20) )

insert into @var

select 1, 'data 1' union all

select 2, 'data 2' union all

select 3, 'data 3'

insert into #temp

select 1, 'data 1' union all

select 2, 'data 2' union all

select 3, 'data 3'

select * from #temp

select * from @var

ROLLBACK -- Temp table scope over here

select * from @var

select * from #temp

--OR--

BEGIN TRAN

declare @var table (id int, data varchar(20) )

create table #temp (id int, data varchar(20) )

insert into @var

select 1, 'data 1' union all

select 2, 'data 2' union all

select 3, 'data 3'

insert into #temp

select 1, 'data 1' union all

select 2, 'data 2' union all

select 3, 'data 3'

select * from #temp

select * from @var

GO -- Here Table type Variable scope will over

select * from #temp

select * from @var

There are many more differences between Table type variable and Temporary table

Difference between OLTP and OLAP

OLAP: (Data warehouse application -- MOLAP, ROLAP, HOLAP)

Difference:

OLTP

Current data

Short database transactions

Online update/insert/delete

Normalization is promoted

High volume transactions

Transaction recovery is necessary

Less Index

More Joins

Adopts an entity relationship(ER)

Customer-oriented, used for data analysis and querying by clerks, clients and IT professionals

OLAP

Current and historical data

Long database transactions

Batch update/insert/delete

Denormalization is promoted

Low volume transactions

Transaction recovery is not necessary

More Index

Less Joins

Adopts star, snowflake or fact constellation model and a subject-oriented database design

Market-oriented, used for data analysis by knowledge workers( managers, executives, analysis)

--OR--

Online transactional processing (OLTP) is designed to efficiently process high volumes of transactions, instantly recording business events (such as a sales invoice payment) and reflecting changes as they occur.

Online analytical processing (OLAP) is designed for analysis and decision support, allowing exploration of often hidden relationships in large amounts of data by providing unlimited views of multiple relationships at any cross-section of defined business dimensions.

--OR--

OLTP System (Operational System):

Operational data; OLTPs are the original source of the data.

To control and run fundamental business tasks

Reveals a snapshot of ongoing business processes

Short and fast inserts and updates initiated by end users

Relatively standardized and simple queries Returning relatively few records

Typically very fast

Can be relatively small if historical data is archived

Highly normalized with many tables

Backup religiously; operational data is critical to run the business, data loss is likely to entail significant monetary loss and legal liability

OLAP System (Data Warehouse):

Consolidation data; OLAP data comes from the various OLTP Databases

To help with planning, problem solving, and decision support

Multi-dimensional views of various kinds of business activities

Periodic long-running batch jobs refresh the data

Often complex queries involving aggregations

Depends on the amount of data involved; batch data refreshes and complex queries may take many hours; query speed can be improved by creating indexes

Larger due to the existence of aggregation structures and history data; requires more indexes than OLTP

Typically de-normalized with fewer tables; use of star and/or snowflake schemas

Instead of regular backups, some environments may consider simply reloading the OLTP data as a recovery method

--OR--

OLTP:

Current data

Short database transactions

Online update/insert/delete

Normalization is promoted

High volume transactions

Transaction recovery is necessary

OLAP:

Current and historical data

Long database transactions

Batch update/insert/delete

Denormalization is promoted

Low volume transactions

Transaction recovery is not necessary

Thursday, October 28, 2010

How to set SSRS Subscriptions

Report subscriptions are processed on the Report Server. They reach end users through delivery extensions that are deployed on the Report Server.

There are two kinds of subscriptions:

• Standard /Event-Driven Subscriptions

• Data-Driven Subscriptions

Standard subscriptions are created and managed by individual users. A standard subscription consists of static values that cannot be varied during subscription processing.

Data-driven subscriptions are dynamic in that the presentation, delivery, and parameter values are retrieved at run time from a data source. You might use data-driven subscriptions if you have a very large recipient list or if you want to vary report output for each recipient. To use data-driven subscriptions, you must have expertise in building queries and an understanding of how parameters are used. Report server administrators typically create and manage these subscriptions

Subscriptions use delivery extensions to determine how to distribute a report and in what format. When a user creates a subscription, he or she can choose one of the available delivery extensions to determine how the report is delivered. Reporting Services includes support for e-mail delivery and delivery to a file share. Developers can create additional delivery extensions to route reports to other locations. Another delivery method is called the null delivery provider. This method is not available to users. Null delivery is used by administrators to improve report server performance by preloading the cache. Subscription information is stored with individual reports in a report server database. You cannot manage subscriptions separately from the report to which they are associated.

E-Mail Delivery in Reporting Services:

Reporting Services includes an e-mail delivery extension that provides a way to e-mail a report to individual users or groups. The e-mail delivery extension is configured through the Reporting Services Configuration Manager tool and by editing the Reporting Services configuration files.

Select the Email Settings--> Sender Address, Current SMTP Delivery Method and SMTP Server.

To distribute or receive a report by e-mail, you define either a standard subscription or a data-driven subscription.

Note: You can subscribe to or distribute only one report at a time. You cannot create a subscription that delivers multiple reports in a single e-mail message. You click the “New Subscription” button, ready to go add an email address.

An E-Mail will be generated and sent to the email addresses found in the recipients’ field.

To:, Cc: and Bcc: – fairly standard fields. You can enter the alias if you’re sending to an internal address, and must type the full email address if sending to an external address.

Reply-To: – The email will be sent from the address specified in SQL Server Reporting Services Configuration Manager on the server. However, if you would like replies to be redirected, simply enter a different address. I have a generic email , reports@company.com, that all reports are sent from.

Subject: – what will appear in the subject line of the email. This can be edited to anything you would like. The two parameters provided, @ReportName and @ExecutionTime, are the only parameters that are allowed in this field.

Include Report – This option, if checked, will attach the report in the format you specify. Consider the size of the report, and the format you want to send it in, when choosing this option. If you’re going to be sending a large report, consider mail size limits on the recipient’s mail server. You may need to send a link, instead.

Render Format – If you have chosen to include the report, you can pick a format here. The formats you can pick from vary based on your version of Reporting Services.

• XML file with report data – the user receives an .xml file as an attachment.

• CSV (comma delimited) – the user receives a .csv file as an attachment.

• Acrobat (PDF) file – the user receives a .pdf file as an attachment.

• MHTML (web archive) – the user receives the report embedded in the body of the email, rather than as an attachment. (I was really excited when I discovered this!)

• Excel – the user receives an .xls file as an attachment.

• TIFF file – the user receives a .tiff file as an attachment.

• Word – the user receives a .doc file as an attachment. This is new in 2008.

Include Link – This option, if checked, will embed a link to the report in the body of the email. Note that if you do this, the report parameters will be embedded in the link.

Priority: – This sets a priority of High, Normal or Low for Outlook. High is the little red exclamation point, and Low is the little blue down arrow.

Comment: – This field controls what will be in the body of the email. Comments will appear above the report, if you selected MHTML, and above the link, if you chose to include it.

Schedule the subscription by clicking Schedule Details: Here you can select hourly, daily, weekly, monthly and many more options. Ok and here now your subscription is created. Wait for the particular time which you schedule.

Another place where you can see the subscription delivery is in the Microsoft SQL Server Management Studio under SQL Server Agent under Jobs Activity monitor: Connect to SQL Server --> SQL Server Agent -->Job Activity

Windows File Share Delivery in Reporting Services:

Reporting Services includes a file share delivery extension so that you can deliver a report to a folder. The file share delivery extension is available by default and requires no additional configuration. To distribute a report to a file share, you define either a standard subscription or a data-driven subscription. You can subscribe to and request delivery for only one report at a time. When defining a subscription that uses file share delivery, you must specify an existing folder as the target folder. The report server does not create folders on the file system. The folder that you specify must be accessible over a network connection. When specifying the target folder in a subscription, use Uniform Naming Convention (UNC) format that includes the computer's network name. Do not include trailing backslashes in the folder path. The example illustrates a UNC path: \

File Name: – This is the name the file will be saved as. Just as a note, you can’t use the same parameters that you can with email. I’ve tested it, and they just appear as @ReportName and @TimeExecuted in the file name. You can, however, use the parameter @timestamp. This will add the time the report was saved to the file name. This is useful for creating uniquely named files.

Add a file extension when the file is created – If this is selected, the file will be assigned a 3-letter extension corresponding to the file format you select. If this is not selected, the 3-letter extension isn’t assigned to the file, although it will still open in the selected format.

Path: – The location on the network the file will be saved to. The folder must already exist – Reporting Services won’t create it. Also, you must use the UNC format: file://servername/foldername/subfolder.

Render Format: – The file format the report will be saved in. The formats you can pick from vary based on your version of Reporting Services.

• XML file with report data – the report is saved as an .xml file.

• CSV (comma delimited) – the report is saved as a .csv file.

• Acrobat (PDF) file – the report is saved as a .pdf file.

• HTML 4.0 – the report is saved as an .html file. Note: if there are images in your report, they will not show in this format.

• MHTML (web archive) – the report is saved as a .mhtml file.

• Excel – the report is saved as an .xls file.

• RPL Renderer – the report is saved as an .rpl file. What is .rpl? RPL (Report Page Layout Format) is a rending format used in newer Reporting Services version for forcing the client to share some of the workload of rendering viewer controls. This enhances performance in the loading process of reports

• TIFF file – the report is saved as a .tif file.

• Word – the report is saved as a .doc file.

Credentials used to access the file share: – This must be a network account, with access to the folder you are saving the file to.

Overwrite options: – These are options for what will happen if the report has previously been saved to the network location.

• Overwrite an existing file with a newer version – Just what it says! If a file with the same name and extension exists in that folder, it will be overwritten. If I save “Sales Report.xls” at 10:00 AM on Monday, then save “Sales Report.xls” at 10:00 AM on Tuesday, only the file from Tuesday will exist.

• Do not overwrite the file if a previous version exists – Again, this is just what it says! If a file with the same name and extension exists in that folder, the file will not save. If you look at the report subscription Status, you will see the message, “A file named “ReportName” already exists in the destination folder. To continue, do one of the following: remove the existing file, specify a different name, or set overwrite options to allow the replacement of the existing file with a newer”.

• Increment file names as newer versions are added – The first time the file is saved, it is as “Report Name.doc”. The next time, it is “Report Name_1.doc”, then “Report Name_2.doc”, etc. Another way to achieve the same effect is to use the @timestamp parameter in the File Name, and set the Overwrite option to “Do not overwrite…”

Schedule the subscription by clicking Schedule Details: Here you can select hourly, daily, weekly, monthly and many more options.

After the scheduled time expires verify the status as you did in the email delivery example. Also go and verify that the report has arrived in the shared folder.

CREATING A DATA-DRIVEN SUBSCRIPTION:

A data-driven subscription provides a way to use dynamic subscription data that is retrieved from an external data source at run time. A data-driven subscription can also use static text and default values that you specify when the subscription is defined. You can use data-driven subscriptions to do the following:

• Distribute a report to a fluctuating list of subscribers. For example, you can use data-driven subscriptions to distribute a report throughout a large organization where subscribers vary from one month to the next, or use other criteria that determines group membership from an existing set of users.

• Filter the report output using report parameter values that are retrieved at run time.

• Vary report output formats and delivery options for each report delivery.

Data-driven subscriptions send out reports to not just one individual or two but to a large number of recipients whose desired format (among other details) are maintained in a database on the server. This is report delivery targeted at mass distribution. In order to work with this hands-on exercise you need to have a database of recipients ready. When the subscription is processed, the Report Server customizes the output for each of the recipients maintained on the database.

Note: To use Data Driven Subscriptions, you must have Evaluation, Developer or Enterprise version of SQL Server. This feature is not supported in Standard or Express.

Create a Subscription database in SQL Server 2008

Before you can send out the email to a number of recipients you need to have information about their email addresses in the correct format in your SQL Server database which stores subscriber information

Connect to SQL Server Management StudioCreate New database Subscribers

CREATE TABLE [dbo].[RecipientInfo]

(

[SubscriptionID] [int] NOT NULL PRIMARY KEY,

[RecipientID] [int] ,

[Email] [nvarchar] (50) NOT NULL,

[FileType] [bit],

[Format] [nvarchar] (20) NOT NULL ,

)

INSERT INTO [dbo].[RecipientInfo] (SubscriptionID, RecipientID,

Email, FileType, Format) VALUES ('101', '289', ' EmailAddress.com', '1', 'Excel')

INSERT INTO [dbo].[RecipientInfo] (SubscriptionID, RecipientID, Email, FileType, Format) VALUES ('201', '284', ' EmailAddress.com','2', 'Word')

INSERT INTO [dbo].[RecipientInfo] (SubscriptionID, RecipientID, Email, FileType, Format) VALUES ('301', '275', EmailAddress.com','3', 'PDF')

Creating the data-driven subscription by email

Open a report and go to the Subscriptions tab. Click “New Data-driven Subscription”.

Step 1

The first step is to establish a description, delivery method and data source for this subscription.

Description – provide a name, which you will see on the Subscriptions tab for the report. I usually put in something that tells me how often this subscription is run and who or where is it being delivered to.

Specify how recipients are notified – This will determine how the report is delivered.

E-mail – The report will be delivered via email.

Specify a data source that contains recipient information:

Specify a shared data source – choose a data source that exists in the Reporting Services installation. I always use this option, as it makes administration much easier.

Specify for this subscription only – you can set up a data source for this item only.

Step 2

If you chose to use a shared data source, you will see this screen, where you can navigate your folders to find your data source.

Note: - If you get an error saying could not valid then go back and uncheck the use windows credential box or check your connection and credentials.

Step 4

In this step, you will specify your email delivery information.

• To – who the email will be delivered to. Set a static email, or choose a value from your query.

• Cc – who the email will be cc’ed to. Set a static email, or choose a value from your query.

• Bcc – who the email will be bcc’ed to. Set a static email, or choose a value from your query.

• Reply to – by default, replies will go the account the reports are sent from. You can set a different email address here. Set a static email, or choose a value from your query.

• Include Report – you can set a static or dynamic option of True or False.

• Render Format – you can set a static option, get the value from your query, or choose no format.

• Priority – this sets the priority in Outlook. You can set a static option, get the value from your query, or choose no value.

• Subject – the subject line of the email. You can set a static subject, or get the value from your query.

• Comment – this will appear in the body of the email. You can set a static option, get the value from your query, or choose no value.

• Include link – determines if you want to include a link to the report in the body of the email. You can set a static option, get the value from your query, or choose no value.

There are so many creative ways to use this! Perhaps you want the report to have a Priority of High if sales are above or below a certain dollar value. The Subject could be dependent on any field in the query. Perhaps you don’t include the report and the link if certain criteria are not met.

Step 5

If your report includes parameters, you will set them here.

Step 6:

The final step is to define when the subscription will be processed.

• When the report data is updated on the report server – the subscription will be processed when the snapshot is refreshed, if the report is set up to use a snapshot.

• On a schedule created for this subscription – create a schedule for this one report subscription.

• On a shared schedule – use a pre-defined schedule, created in Site Settings.

Your data Driven Subscription got created and waits for to completion of the schedule and see the results that it should send a mail to all recipient which we stored in database.

Null Delivery Provider – The What and Why

A feature of Reporting Services that can be used to improve performance is report caching. To sum up what could be a future post of its own: caching will save a copy of the report in the ReportServerTempDB, which reduces the time it takes for a report to render for a user. This can be very useful if you have a report that takes a long time to generate, and is run frequently with no parameters or the same parameters.

When you set up a report to use caching, the cache is created the first time a user runs it. You set the cache to expire after a certain amount of time. So, say you have a report that takes 5 minutes to generate, and 10 users will run it every day, with the first one coming in at 7:00 AM. You set the report to cache a temporary copy, and expire it every day at 7:00 PM. The first user would come in and run it, and it would take 5 minutes. Every other user that runs it between the time of the first user and the expiration would notice a significant decrease in rendering time.

But, how can you help the users by reducing the time it takes the first user to run it in the morning?

The answer is to set up a report subscription using the Null Delivery Provider. This saves a copy of the report in cache at a time you specify – in this example, perhaps 6:00 AM. When the first user comes in to run the report, the rendering time is reduced.

Data-driven Subscriptions by Null Delivery Provider

Open a report and go to the Subscriptions tab. Click “New Data-driven Subscription”.

Step 1

The first step is to establish a description, delivery method and data source for this subscription.

Description – provide a name, which you will see on the Subscriptions tab for the report. I usually put in something that tells me how often this subscription is run and who or where is it being delivered to.

Specify how recipients are notified – This will determine how the report is delivered.

Choose Null Delivery Provider

Specify a data source that contains recipient information:

Specify a shared data source – choose a data source that exists in the Reporting Services installation. I always use this option, as it makes administration much easier.

Specify for this subscription only – you can set up a data source for this item only.

Step 2

If you chose to use a shared data source, you will see this screen, where you can navigate your folders to find your data source. I usually store mine in the “Data Sources” folder – that makes them easy to find!

If you choose to specify a data source for this subscription only, you will see this screen. Enter a connection string and credentials for your data source.

Step 3

In this step, you define the query that can return parameter values for you.

When using caching for a report, be aware that it works best when you have no parameters, or very few choices. Every distinct combination of parameters will create a different version of the report in cache.

I am going to set my start date, end date, and folder parameters for this report in this step.

You have the option to specify a time-out, in seconds.

You can click the Validate button to ensure the query will run against your data source.

Step 4

There are no delivery settings for Null Delivery Provider. The report is always “delivered” to ReportServerTempDB.

Step 5

If your report includes parameters, you will set them here. You can set a static value for each, or, as with the case below, choose a value from the query.

Step 6

The final step is to define when the subscription will be processed.

When the report data is updated on the report server – the subscription will be processed when the snapshot is refreshed, if the report is set up to use a snapshot.

On a schedule created for this subscription – create a schedule for this one report subscription.

On a shared schedule – use a pre-defined schedule, created in Site Settings.

One thing to keep in mind with Null Delivery is that the copy of the report in cache must be expired before you create a new copy using this method. I would suggest setting up the report cache to expire on a specific schedule, shortly before this subscription runs.

This explanation of Null Delivery Provider is just one piece of report caching. Caching can be a way to increase performance for your users, if used correctly. Consider using both caching and data-driven subscriptions to improve your environment.

Creating a Deployment Manifest

Using a Deployment Manifest in SSIS allows you to deploy a set of packages to a target location using a wizard for installing your packages. The benefit to using it is the nice user interface that a wizard provides. The disadvantage of using it is it’s lack of flexibility. When using the Deployment Manifest keep in mind that it’s an all or nothing deployment, which means you cannot choose to just deploy one or two packages at a time from your SSIS project. Using the Deployment Manifest will deploy all your packages that are in your project. Creating the manifest file is a very simple process that I will walk you through.

Creating a Deployment Manifest

With the Solution Explorer open Right-Click on the project name and click Properties

This will open the Project Properties Pages

Select the Deployment Utility from the page

Change the CreateDeploymentUtility property to True

After you’ve gone through these steps the next time you build your project it will create the file (YourProjectName).SSISDeploymentManifest. This file is located in the same folder as your packages in the bin\Deployment folder.

If you run this file it will open the Package Installation Wizard that will allow you to deploy all your packages that were located in the project to a desired location.

Wednesday, October 27, 2010

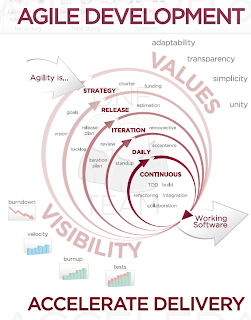

What is Agile

Twelve principles:

Twelve principles:

1. Customer satisfaction by rapid delivery of useful software

2. Welcome changing requirements, even late in development.

3. Working software is delivered frequently (weeks rather than months)

4. Working software is the principal measure of progress

5. Sustainable development, able to maintain a constant pace

6. Close, daily cooperation between businesspeople and developers.

7. Face-to-face conversation is the best form of communication (co-location)

8. Projects are built around motivated individuals, who should be trusted

9. Continuous attention to technical excellence and good design

10. Simplicity

11. Self-organizing teams

12. Regular adaptation to changing circumstances

Characteristics:

There are many specific agile development methods. Most promote development, teamwork, collaboration, and process adaptability throughout the life-cycle of the project.

Agile methods break tasks into small increments with minimal planning, and do not directly involve long-term planning. Iterations are short time frames (timeboxes) that typically last from one to four weeks. Each iteration involves a team working through a full software development cycle including planning, requirements analysis, design, coding, unit testing, and acceptance testing when a working product is demonstrated to stakeholders. This minimizes overall risk and allows the project to adapt to changes quickly. Stakeholders produce documentation as required. An iteration may not add enough functionality to warrant a market release, but the goal is to have an available release (with minimal bugs) at the end of each iteration. Multiple iterations may be required to release a product or new features.

Team composition in an agile project is usually cross-functional and self-organizing without consideration for any existing corporate hierarchy or the corporate roles of team members. Team members normally take responsibility for tasks that deliver the functionality an iteration requires. They decide individually how to meet an iteration's requirements.

Agile methods emphasize face-to-face communication over written documents when the team is all in the same location. Most agile teams work in a single open office (called a bullpen), which facilitates such communication. Team size is typically small (5- 12 people) to simplify team communication and team collaboration. Larger development efforts may be delivered by multiple teams working toward a common goal or on different parts of an effort. This may require a coordination of priorities across teams. When a team works in different locations, they maintain daily contact through videoconferencing, voice, e-mail, etc.